gRPCurl is a command-line tool that lets you query your gRPC servers, much as you can query HTTP endpoints on your web server using curl. The implementation code for gRPCurl now also helps to power other dynamic gRPC tools, such as Uber’s Prototool.

The rest of this post tells the tale of how gRPCurl came to be. If you just want to know more about how to install and use it, jump on over to the GitHub repo.

(You can also take a look at my GopherCon 2018 talk, which introduces in more detail some of the Go libraries that were built in the process of creating gRPCurl.)

Fullstory and gRPC

At Fullstory, we have undertaken the task of moving all of our server-to-server communications to gRPC. In the beginning, much of the Fullstory app was a monolithic Google App Engine service. Several features needed bespoke backends, such as Apache Solr, that could not run inside the Google App Engine environment. The early process of stitching together frontend and backend services used JSON and HTTP 1.1 (more specifically, Google App Engine’s URL Fetch service).

Knowing the power of RPC as a programming model, we built a custom RPC system. All of our server code is written in Go, so the first iteration targeted only Go clients and servers. In fact, it eschewed an IDL, such as Protocol Buffers, and used “go/types” and related packages to examine Go source code. So RPC interfaces and data types were all defined in Go code.

This homegrown solution was created in January 2015. A few months later, the gRPC organization on GitHub was created, and a little over a year after that, gRPC 1.0 was released. gRPC solves the same problems more efficiently than JSON and HTTP 1.1, and it even supports streaming and full-duplex bidirectional communication. It also leverages Protocol Buffers (“protobufs” for short), a technology with which numerous Fullstory devs were already familiar. By using the protobuf IDL, which can target multiple languages, we can more easily share data structures between our Go code and our browser client code, which is written in TypeScript. Over the subsequent months and years, the gRPC project has become mature and stable, with an entire ecosystem sprouting up around it. This helped to cement our commitment to gRPC.

Enter Commander

One project quickly became the biggest user of gRPC (in terms of number of methods): Commander, an internal command-line tool, plus related server components, for performing various admin tasks. Its role was to supplant various web UIs, providing a new scheme for access control that would be stronger and more flexible than what was used in the older web-UI-based admin tools.

A key component of Commander’s architecture was a gRPC server, named Commandant, that acted as Commander’s API server, interacting with Google Cloud Project resources and communicating with other Fullstory backends. Commandant was the workhorse. Much of the functionality in Commander amounted to creating an RPC request from command-line arguments, sending the request to Commandant, and then formatting the results. Each command in Commander generally mapped to a single RPC endpoint in Commandant. So adding a new command was very mechanical and involved a good bit of boilerplate.

One of our watchwords at Fullstory is “bionics”, and the process to add a command to Commander did not feel very bionic. The ideal “bionic” Commander client would be totally dynamic, not requiring any new client-side code to add a new action:

Commander could query the Commandant server for the list of supported endpoints.

For each endpoint, it would create a new command-line action. For each of these actions, it would create command-line options for each request field. Once all command-line actions were constructed, the actual command-line arguments could be parsed.

When an action is invoked, the command-line options would be packaged into a request and sent to the server, to the action’s corresponding RPC method.

Finally, it would pretty-print the resulting RPC response.
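To make that flow concrete, here is a rough sketch of the four steps above using only Go's standard `flag` package. It is an illustration, not Commander's actual code: the `endpoint` type, the method names, and the field names are all made up, and a real client would fetch the endpoint list from the server and send the request over gRPC rather than just printing it.

```go
package main

import (
	"flag"
	"fmt"
	"sort"
)

// endpoint is a hypothetical stand-in for what a server could report about
// one RPC method: its name and the names of its request fields.
type endpoint struct {
	Method string
	Fields []string
}

// buildActions creates one flag set per endpoint, with one string option
// per request field, keyed by the method name.
func buildActions(endpoints []endpoint) map[string]*flag.FlagSet {
	actions := make(map[string]*flag.FlagSet)
	for _, ep := range endpoints {
		fs := flag.NewFlagSet(ep.Method, flag.ContinueOnError)
		for _, f := range ep.Fields {
			fs.String(f, "", "value for request field "+f)
		}
		actions[ep.Method] = fs
	}
	return actions
}

// buildRequest parses args for the chosen action and packages every option
// that was explicitly set into a generic request map.
func buildRequest(actions map[string]*flag.FlagSet, args []string) (string, map[string]string, error) {
	if len(args) == 0 {
		return "", nil, fmt.Errorf("no action given")
	}
	fs, ok := actions[args[0]]
	if !ok {
		return "", nil, fmt.Errorf("unknown action %q", args[0])
	}
	if err := fs.Parse(args[1:]); err != nil {
		return "", nil, err
	}
	req := make(map[string]string)
	fs.Visit(func(f *flag.Flag) { req[f.Name] = f.Value.String() })
	return args[0], req, nil
}

func main() {
	// Assumption: in a real client this list would come from the server.
	actions := buildActions([]endpoint{
		{Method: "GetUser", Fields: []string{"id"}},
		{Method: "SetQuota", Fields: []string{"org", "limit"}},
	})
	method, req, err := buildRequest(actions, []string{"SetQuota", "-org", "acme", "-limit", "10"})
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	// Pretty-print what would be sent; a real client would print the response.
	keys := make([]string, 0, len(req))
	for k := range req {
		keys = append(keys, k)
	}
	sort.Strings(keys)
	fmt.Println("call", method)
	for _, k := range keys {
		fmt.Printf("  %s = %s\n", k, req[k])
	}
}
```

The point of the sketch is that nothing in the client names a specific command: adding an endpoint to the list is enough to get a new action with its own options.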

However, the normal flow with protobufs and gRPC is to invoke the protoc command-line tool, which takes in source files in the protobuf IDL and emits code of your choice (in Fullstory’s case, Go code). The normal flow would require re-compiling Commander every time the service interface for Commandant changed. That’s not very bionic at all.

Having used protobufs and protobuf-based RPC systems for quite some time, I knew of other tools to make it truly dynamic. In C++ and Java, there is rich support for protobuf descriptors and dynamic messages:

Descriptors are the root of reflection for protobufs. For each language element in the protobuf IDL, there is a type of descriptor that can be used to inspect that element. For example, a descriptor for a message provides information on the names, types, and tag numbers for all of that message’s fields. Similarly, a descriptor for a service provides information on the names and signatures of all of the service’s methods.

Dynamic messages can generically represent any kind of protobuf message. They are like maps of field tag numbers to values. Field descriptors are used to validate field values and make sure they're the right type. It turns out that a message’s field descriptors are all that is needed to encode such a map into the protobuf binary format. So a dynamic message can be marshaled to and unmarshaled from bytes, or even text or JSON.

These would be necessary building blocks for building a dynamic client in Commander. There was just one problem: the Go protobuf runtime library had very poor support for descriptors and zero support for dynamic messages.

Caveat: As of August 2018, significantly better support for reflection and descriptors is planned for an upcoming v2 of the protobuf runtime API for Go.

Protoreflect (Detour into Mexico)

Having used descriptors and dynamic messages extensively in Java, I knew a good bit about what would be involved to provide similar support in Go. So I started toying with the idea in a personal project on GitHub that I called protoreflect.

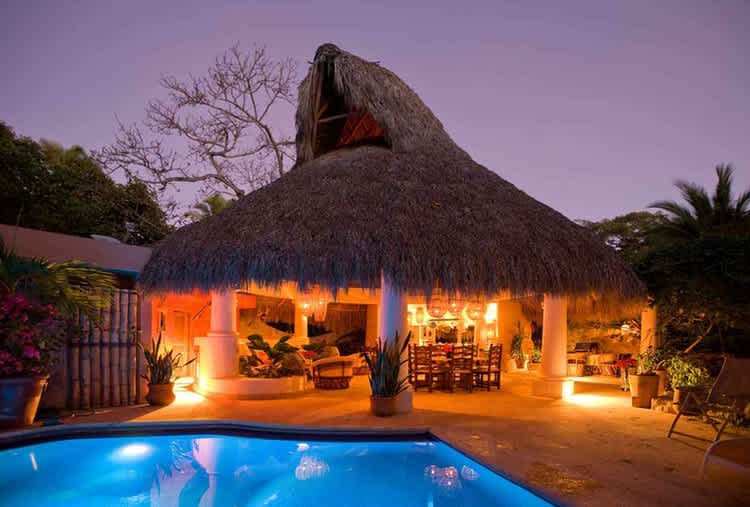

This was in the winter of 2017. It was about this time that I went on a stunning vacation to the villas at Punta el Custodio, in Mexico. While relaxing under the cabana of Casa Colibri, I cranked out most of the code for the protoreflect library.

Casa Colibri main living area, viewed from bathing pool. Photos courtesy of villanayarit.com

During the periods of relaxing downtime, including times when it rained, I managed some highly focused coding, finishing an alpha-quality version of both descriptors and dynamic messages. I also did what other sane humans do on vacation, of course: spending time with the family at the beach and in the pool, making tropical drinks, and eating delicious local cuisine.

When I returned from vacation (refreshed and invigorated!), we found numerous uses for descriptors, not just in building a dynamic gRPC client. For example, the protobuf IDL lets you add options to almost any element: files, enums, messages, fields, RPC methods, etc. Options are structured and strongly typed metadata, very similar to annotations in Java.

You create custom options by creating extensions for the various *Options types defined in google/protobuf/descriptor.proto. You can then refer to those options to annotate elements in your other source files.

Early on, we began using protoreflect to examine RPC method options so that we could statically declare things like authorization policies (e.g., 'this method requires these scopes'), right in the methods’ definitions in proto source. We could then use a gRPC interceptor (i.e., middleware) to examine the options and enforce the policy, before the request even makes it to the method’s handler function. (If you’re curious how a gRPC server interceptor in Go can access method descriptors, in order to examine method options and apply policy, you might be interested in the code snippet in this thread, which provides a little guidance.)

Introducing gRPCurl

We began our stroll down “Reflection Road” to implement a dynamic client for our Commander tool. But it turns out that the same building blocks needed to do this could also be used to build a dynamic client that could talk to any gRPC server, not just Commandant.

Since we’d chosen to double down on gRPC, we wanted a simple way to do exploratory testing or debugging of our services and even scripting of actions, much in the way you’d use cURL for standard web servers.

The main gRPC repo contains a similar simple tool, grpc_cli, but it has its drawbacks. One is that it does not support streaming; another is that every invocation would be very verbose and error-prone, because we would need to pass command-line options for the metadata that authenticates requests to our gRPC servers. So we wanted a command-line program that would be easy to recompose into a custom Fullstory-specific tool that could handle our service-to-service authentication. For this, it needed to be in Go, so that we could simply link in our existing authentication package.

So I stitched the same protoreflect components together to make a general-purpose tool, and thus was born gRPCurl. The root package ("github.com/fullstorydev/grpcurl") can be used as a library, providing a simple API for doing dynamic RPC. The repo also includes a command-line interface that provides a generic dynamic gRPC tool:

    # fetch the repo
    $ go get github.com/fullstorydev/grpcurl

    # install the grpcurl command-line program
    $ go install github.com/fullstorydev/grpcurl/cmd/grpcurl

    # spin up a test server included in the repo
    $ go install github.com/fullstorydev/grpcurl/testing/cmd/testserver
    $ testserver -p 9876 >/dev/null &

    # and take grpcurl for a spin
    $ grpcurl -plaintext localhost:9876 list
    grpc.reflection.v1alpha.ServerReflection
    grpc.testing.TestService

    $ grpcurl -plaintext localhost:9876 list grpc.testing.TestService
    grpc.testing.TestService.EmptyCall
    grpc.testing.TestService.FullDuplexCall
    grpc.testing.TestService.HalfDuplexCall
    grpc.testing.TestService.StreamingInputCall
    grpc.testing.TestService.StreamingOutputCall
    grpc.testing.TestService.UnaryCall

    $ grpcurl -plaintext localhost:9876 describe \
        grpc.testing.TestService.UnaryCall
    grpc.testing.TestService.UnaryCall is a method:
    rpc UnaryCall ( .grpc.testing.SimpleRequest ) returns
        (.grpc.testing.SimpleResponse );

    # if no request data specified, an empty request is sent
    $ grpcurl -emit-defaults -plaintext localhost:9876 \
        grpc.testing.TestService.UnaryCall
    {
      "payload": null,
      "username": "",
      "oauthScope": ""
    }

    $ grpcurl -plaintext -msg-template localhost:9876 \
        describe grpc.testing.SimpleRequest
    grpc.testing.SimpleRequest is a message:
    message SimpleRequest {
      .grpc.testing.PayloadType response_type = 1;
      int32 response_size = 2;
      .grpc.testing.Payload payload = 3;
      bool fill_username = 4;
      bool fill_oauth_scope = 5;
      .grpc.testing.EchoStatus response_status = 7;
    }

    Message template:
    {
      "responseType": "COMPRESSABLE",
      "responseSize": 0,
      "payload": {
        "type": "COMPRESSABLE",
        "body": ""
      },
      "fillUsername": false,
      "fillOauthScope": false,
      "responseStatus": {
        "code": 0,
        "message": ""
      }
    }

    $ grpcurl -emit-defaults -plaintext \
        -d '{"payload":{"body":"abcdefghijklmnopqrstuvwxyz01"}}' \
        localhost:9876 grpc.testing.TestService.UnaryCall
    {
      "payload": {
        "type": "COMPRESSABLE",
        "body": "abcdefghijklmnopqrstuvwxyz01"
      },
      "username": "",
      "oauthScope": ""
    }

All of our servers at Fullstory expose the gRPC reflection service, which greatly simplifies using gRPCurl. But, in case you want to use it against a server that does not support reflection, you can also point gRPCurl at compiled file descriptors or even at protobuf source files.

At Fullstory, we now use gRPCurl to poke at staging and even production backends. This is particularly useful in cases where we have gRPC APIs for specific activities (usually advanced administration stuff) but no corresponding web UI. Our internal tool uses all of the same guts as the open-source command; it just tweaks some default values for command-line options and also knows how to authenticate with Fullstory backend servers.